'Twas the day before genesis, when all was prepared, geth was in sync, my beacon node paired. Firewalls configured, VLANs galore, hours of preparation meant nothing ignored.

Then all at once everything went awry, the SSD in my system decided to die. My configs were gone, chain data was history, nothing to do but trust in next day delivery.

I found myself designing backups and redundancies. Complicated systems consumed my fantasies. Thinking further I came to realise: worrying about these kinds of failures was quite unwise.

Incentives

The beacon chain has several mechanisms to incentivise validator behaviour, all of which depend on the current state of the network. It is therefore vital to consider these failure cases in the larger context of how other validators might fail when deciding which ways of securing your node(s) are worthwhile and which are not.

As an active validator, your balance either increases or decreases; it never goes sideways*. A very reasonable way to maximise your profits is therefore to minimise your downsides. There are 3 ways your balance can be reduced by the beacon chain:

- Penalties are issued when your validator misses one of its duties (e.g. because it is offline)

- Inactivity leaks are handed out to validators who miss their duties while the network is failing to finalise (i.e. when your validator being offline is highly correlated with other validators being offline)

- Slashings are given to validators who produce blocks or attestations which are contradictory and could therefore be used in an attack

* On average, a validator's balance may stay the same, but for any given duty, it is either rewarded or penalised.
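To make "contradictory" a little more concrete for attestations, here is a minimal sketch of the two slashable cases as I understand them from the phase 0 spec: a double vote and a surround vote. The `Attestation` class and its field names are simplified stand-ins invented for this illustration, not the spec's actual types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical, simplified stand-in for the data a validator signs."""
    source_epoch: int  # checkpoint epoch the vote builds on
    target_epoch: int  # checkpoint epoch being voted for
    block_root: str    # head block the validator vouches for


def _surrounds(outer: Attestation, inner: Attestation) -> bool:
    # outer's source/target span strictly contains inner's span
    return (outer.source_epoch < inner.source_epoch
            and inner.target_epoch < outer.target_epoch)


def is_slashable(a: Attestation, b: Attestation) -> bool:
    """Return True if one validator signing both a and b is contradictory."""
    # Double vote: two different votes for the same target epoch.
    double_vote = a != b and a.target_epoch == b.target_epoch
    # Surround vote: one vote's span surrounds the other's (either order).
    surround_vote = _surrounds(a, b) or _surrounds(b, a)
    return double_vote or surround_vote
```

Two nodes signing independently with the same keys can easily disagree about the head block for the same target epoch, which is the double-vote case.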

Correlation

The effect of a single validator being offline or performing a slashable action is small in terms of the overall health of the beacon chain. It is therefore not penalised heavily. In contrast, if many validators are offline, the balance of the offline validators can decrease much more quickly.

Similarly, if many validators perform slashable actions around the same time, from the perspective of the beacon chain this is indistinguishable from an attack. It is therefore treated as such, and up to 100% of the offending validators' stake is burned.

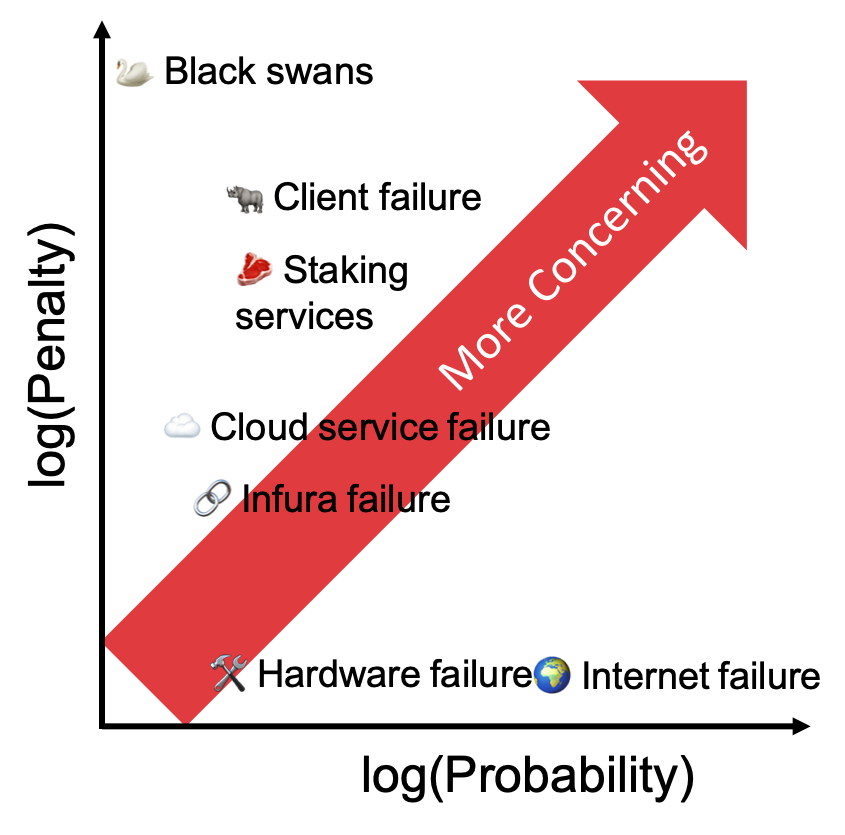

Because of these “anti-correlation” incentives, validators should worry more about failures that might affect others at the same time than about isolated, individual problems.
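The shape of that anti-correlation incentive for slashings can be sketched roughly as follows. This is a simplification under stated assumptions: the real rule lives in the beacon chain spec, uses integer arithmetic, and comes on top of a smaller initial penalty. The function name and the default `multiplier` are my own choices for illustration, picked so that one third of the stake being slashed together burns everything.

```python
def correlation_penalty(effective_balance: float,
                        slashed_fraction: float,
                        multiplier: float = 3.0) -> float:
    """Balance burned for one slashed validator, in the unit of effective_balance.

    slashed_fraction: share of total stake slashed around the same time (0..1).
    multiplier: ASSUMPTION for illustration; check the spec for the live value.
    """
    if not 0.0 <= slashed_fraction <= 1.0:
        raise ValueError("slashed_fraction must be between 0 and 1")
    # The burned share grows with how many others were slashed, capped at 100%.
    burned_share = min(multiplier * slashed_fraction, 1.0)
    return effective_balance * burned_share
```

With these assumptions, a 32 ETH validator slashed alongside 1% of the stake would lose about 0.96 ETH to this penalty, whereas one slashed alongside half the network would lose all 32 ETH.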

Causes and their probability.

So let's think through some failure cases and examine them through the lens of how many others would be affected at the same time, and how badly your validators would be penalised.

I disagree with @Econoar here that these are worst-case problems. These are more moderate issues. A home UPS and dual WAN address failures that are not correlated with other users and should therefore be much lower on your list of concerns.

🌍 Internet/power failure

If you are validating from home, it is very likely you will run into one of these failures at some point in the future. Residential internet and power connections don't have guaranteed uptime. However, when the internet does go down, or your power goes out, the outage is usually limited to your local area and even then only lasts a few hours.

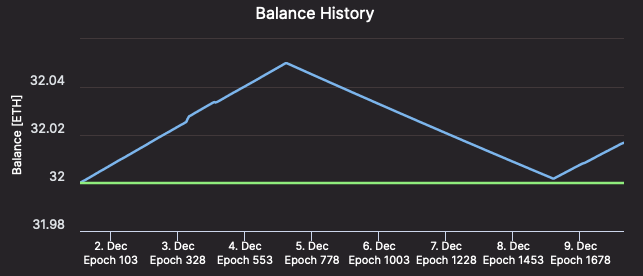

Unless you have very spotty internet/power, it might not be worthwhile to pay for failover connections. You will incur a few hours of penalties, but as the rest of the network is running normally, your penalties will be roughly equal to what your rewards would have been over the same period. In other words, a k-hour-long failure sets your validator's balance back to roughly where it was k hours before the failure, and after k additional hours your validator's balance is back to its pre-failure amount.

[Validator #12661 regaining ETH as quickly as it was lost – Beaconcha.in]
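The k-hours-back, k-hours-forward arithmetic can be sketched as below. It assumes the network is otherwise healthy, so that the hourly penalty while offline equals the hourly reward while online; `reward_per_hour` is a made-up illustrative figure, not a measured one.

```python
def balance_after_outage(start_balance: float,
                         reward_per_hour: float,
                         hours_offline: float,
                         hours_since_recovery: float) -> float:
    """Balance after an isolated outage followed by some time back online.

    ASSUMPTION: penalty per offline hour == reward per online hour.
    """
    lost = reward_per_hour * hours_offline            # penalties while offline
    regained = reward_per_hour * hours_since_recovery  # rewards after recovery
    return start_balance - lost + regained


def cost_versus_no_outage(reward_per_hour: float, hours_offline: float) -> float:
    """Total shortfall compared with never going offline.

    The validator pays penalties for k hours and also misses k hours of rewards.
    """
    return 2 * reward_per_hour * hours_offline
```

For example, `balance_after_outage(32.0, 0.001, 5, 5)` comes back to roughly 32.0: five hours of penalties are undone by five hours of rewards.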

🛠 Hardware failure

Like internet failure, hardware failure strikes randomly, and when it does, your node might be down for a few days. It is valuable to consider the expected rewards over the lifetime of the validator versus the cost of redundant hardware. Is the expected value of the failure (the offline penalties times the chance of it happening) greater than the cost of the redundant hardware?

Personally, I find the chance of failure low enough, and the cost of fully redundant hardware high enough, that it almost certainly isn’t worth it. But then again, I am not a whale 🐳 ; as with any failure scenario, you need to evaluate how this applies to your particular situation.
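As a rough sketch of that comparison, the snippet below multiplies the chance of a hardware failure by the penalties it would cause and compares the result with the price of redundancy. All names and numbers are hypothetical placeholders; plug in your own estimates, with every amount in the same unit (ETH here).

```python
def expected_failure_cost(p_failure: float,
                          days_offline: float,
                          penalty_per_day: float) -> float:
    """Expected cost of a failure: probability times the penalties it incurs."""
    return p_failure * days_offline * penalty_per_day


def redundancy_worth_it(p_failure: float,
                        days_offline: float,
                        penalty_per_day: float,
                        redundancy_cost: float) -> bool:
    """True if the expected loss exceeds the cost of the redundant hardware.

    ASSUMPTION: redundancy removes the downtime entirely, which is optimistic.
    """
    return expected_failure_cost(p_failure, days_offline, penalty_per_day) > redundancy_cost
```

With a 5% chance of a 3-day outage, a small staker losing 0.02 ETH per day faces an expected cost of about 0.003 ETH, far below a hypothetical 0.5 ETH of spare hardware. A whale losing 20 ETH per day across many validators faces about 3 ETH, and the answer flips.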

☁️ Cloud services failure

Maybe, to avoid the risks of hardware or internet failure altogether, you decide to go with a cloud provider. With a cloud provider, you have introduced the risk of correlated failures. The question that matters is, how many other validators are using the same cloud provider as you?

A week before genesis, Amazon AWS had a prolonged outage which affected a large portion of the web. If something similar were to happen now, enough validators would go offline at the same time that the inactivity penalties would kick in.

Even worse, if a cloud provider were to duplicate the VM running your node and accidentally leave the old and the new node running at the same time, you could be slashed (the penalties incurred would be especially bad if this accidental duplication affected many other nodes too).

If you are insistent on relying on a cloud provider, consider switching to a smaller provider. It may end up saving you a lot of ETH.

🥩 Staking Services

There are several staking services on mainnet today with varying degrees of decentralisation, but they all contain an increased risk of correlated failures if you trust them with your ETH. These services are necessary components of the eth2 ecosystem, especially for those with less than 32 ETH or without the technical know-how to stake, but they are architected by humans and therefore imperfect.

If staking pools eventually grow to be as large as eth1 mining pools, then it is conceivable that a bug could cause mass slashings or inactivity penalties for their members.

🔗 Infura Failure

Last month Infura went down for 6 hours causing outages across the Ethereum ecosystem; it is easy to see how this is likely to result in correlated failures for eth2 validators.

In addition, 3rd party eth1 API providers necessarily rate-limit calls to their service; on the Medalla testnet, this has left validators unable to produce valid blocks.

The best solution is to run your own eth1 node: you won’t encounter rate-limiting, it will reduce the likelihood of your failures being correlated, and it will improve the decentralisation of the network as a whole.

Eth2 clients have also started adding the possibility of specifying multiple eth1 nodes. This makes it easy to switch to a backup endpoint in the event your primary endpoint fails (Lighthouse: --eth1-endpoints, Prysm: PR#8062; Nimbus & Teku will likely add support somewhere in the future).

I highly recommend adding backup API options as cheap/free insurance (EthereumNodes.com shows the free and paid API endpoints and their current status). This is useful whether you are running your own eth1 node or not.
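The idea behind a backup endpoint list is simple enough to sketch: try each eth1 endpoint in order and use the first one that answers. The snippet below is only an illustration of that idea, not how any particular client implements it. The function names are my own, and the probe uses the standard JSON-RPC `eth_blockNumber` call.

```python
import json
import urllib.request
from typing import Callable, Iterable, Optional


def probe_eth1(url: str, timeout: float = 3.0) -> bool:
    """Return True if the endpoint answers a JSON-RPC eth_blockNumber call."""
    payload = json.dumps(
        {"jsonrpc": "2.0", "id": 1, "method": "eth_blockNumber", "params": []}
    ).encode()
    request = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            body = json.load(response)
    except (OSError, ValueError):
        # Network errors, timeouts, bad URLs and malformed JSON all count as down.
        return False
    return isinstance(body, dict) and "result" in body


def first_healthy(endpoints: Iterable[str],
                  probe: Callable[[str], bool] = probe_eth1) -> Optional[str]:
    """Return the first endpoint that passes the probe, or None if all fail."""
    for url in endpoints:
        if probe(url):
            return url
    return None
```

Listing your own eth1 node first and a third-party API second, for example `first_healthy(["http://localhost:8545", backup_url])` with a `backup_url` of your choosing, keeps you on your own node whenever it is up.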

🦏 Failure of a particular eth2 client

Despite all the code review, audits, and rockstar work, all of the eth2 clients have bugs hiding somewhere. Most of them are minor and will be caught before they present a major problem in production, but there is always the chance that the client you choose will go offline or cause you to be slashed. If this were to happen, you would not want to be running a client with > 1/3 of the nodes on the network.
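The 1/3 figure follows from finality needing two thirds of the stake to keep attesting. A small sketch of that check, treating each client's share of nodes as its share of stake (a simplification) and using made-up shares:

```python
from fractions import Fraction
from typing import Dict

# Finality needs at least 2/3 of the stake to keep attesting.
FINALITY_THRESHOLD = Fraction(2, 3)


def can_finalise_without(client_shares: Dict[str, Fraction],
                         failed_client: str) -> bool:
    """True if the network can still finalise when one client goes offline.

    client_shares: HYPOTHETICAL share of stake run by each client (sums to 1).
    """
    remaining = sum(
        share for name, share in client_shares.items() if name != failed_client
    )
    return remaining >= FINALITY_THRESHOLD
```

If the check returns False for the client you run, a bug in that client would stop finality and you would be caught in the resulting inactivity leak rather than paying ordinary offline penalties.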

You must strike a tradeoff between what you deem to be the best client vs how popular that client is. Consider reading through the documentation of another client so that if something happens to your node, you know what to expect in terms of installing and configuring a different client.

If you have lots of ETH at stake, it is probably worth running multiple clients each with some of your ETH to avoid putting all your eggs in one basket. Otherwise, Vouch is an interesting offering for multi-node staking infrastructure, and Secret Shared Validators are seeing rapid development.

🦢 Black swans

There are of course many unlikely, unpredictable, yet dangerous scenarios that will always present a risk: scenarios that lie outside the obvious decisions about your staking set-up. Examples such as Spectre and Meltdown at the hardware level, or kernel bugs such as BleedingTooth, hint at some of the hazards that exist across the entire hardware stack. By definition, it is not possible to entirely predict and avoid these problems; instead you generally must react after the fact.

What to worry about

Ultimately this comes down to calculating the expected value E(X) of a given failure: how likely an event is to happen, and what the penalties would be if it did. It is vital to consider these failures in the context of the rest of the eth2 network since the correlation greatly affects the penalties at hand. Comparing the expected cost of a failure to the cost of mitigating it will give you the rational answer as to whether it is worth getting in front of.
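In symbols (my own notation, not taken from any spec), the comparison could be written as:

$$
E(X_i) = p_i \, c_i(\rho_i), \qquad \text{mitigate failure } i \text{ only if } \; \bigl(p_i - p_i'\bigr)\, c_i(\rho_i) > m_i
$$

Here $p_i$ is your estimate of the probability of failure $i$ over the period you care about, $c_i(\rho_i)$ is the penalty it would incur given the fraction $\rho_i$ of other validators you expect to fail at the same time, $p_i'$ is the probability of the failure once mitigated, and $m_i$ is the cost of the mitigation. The dependence of $c_i$ on $\rho_i$ is where correlation enters: the same outage costs far more when many others share it.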

No one knows all the ways a node can fail, nor how likely each failure is, but by making individual estimates of the chances of each failure type and mitigating the biggest risks, the “wisdom of the crowd” will prevail and on average the network as a whole will make a good estimate. Furthermore, because of the different risks each validator faces, and the differing estimates of those risks, the failures you did not account for will be caught by others and therefore the degree of correlation will be reduced. Yay decentralisation!

📕 DON’T PANIC

Finally, if something does happen to your node, don’t panic! Even during inactivity leaks, penalties are small on short time scales. Take a few moments to think through what happened and why. Then make a plan of action to fix the problem. Then take a deep breath before you proceed. An extra 5 minutes of penalties is preferable to being slashed because you did something ill-advised in a rush.

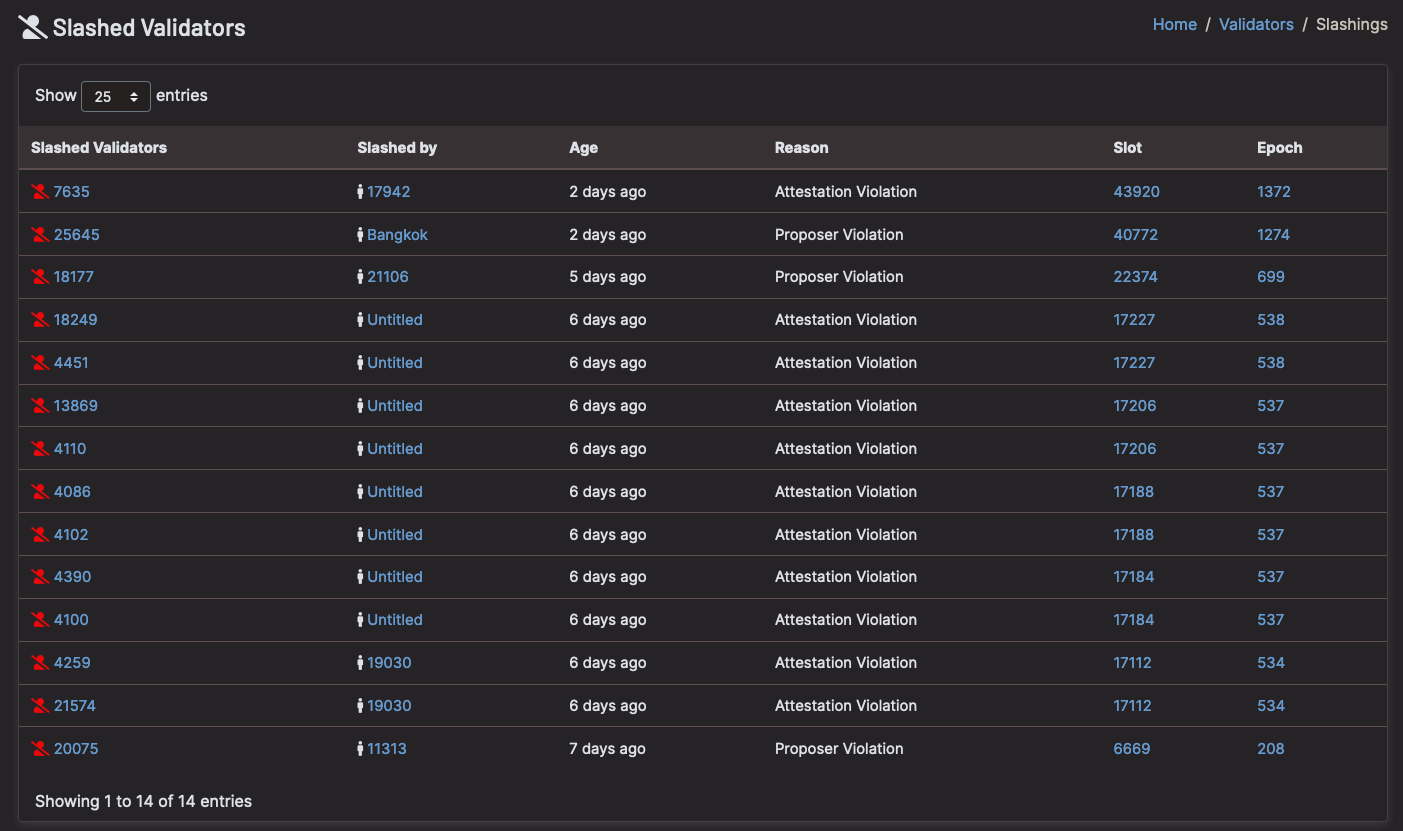

Most of all: 🚨 Do not run 2 nodes with the same validator keys! 🚨

Thanks Danny Ryan, Joseph Schweitzer, and Sacha Yves Saint-Leger for review

[Slashings because validators ran >1 node – Beaconcha.in]